Abstract

1. Introduction

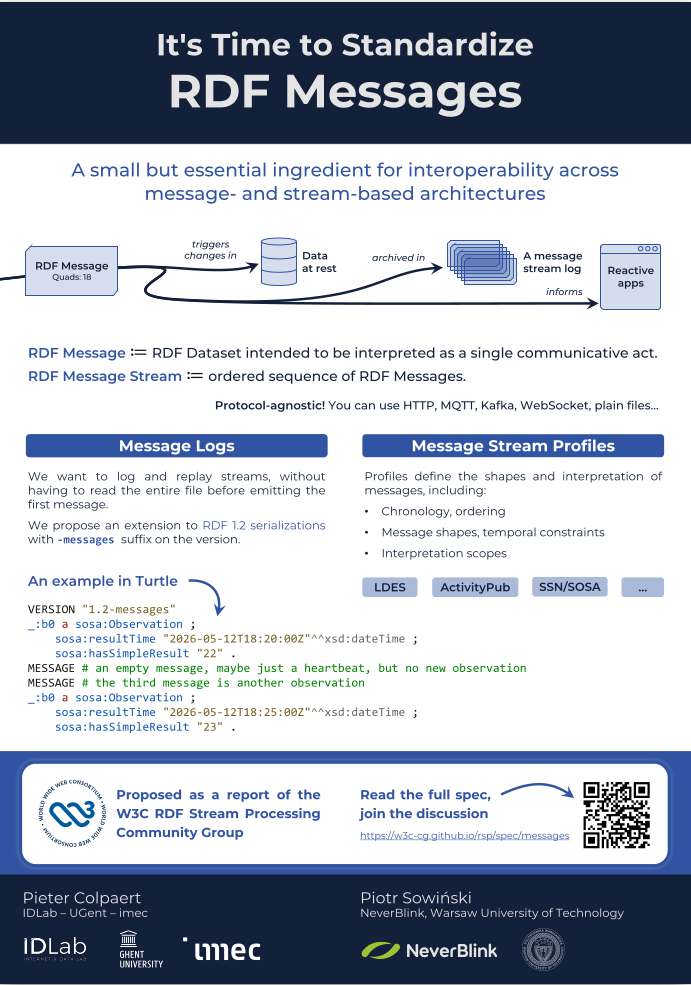

The deployment context of RDF systems has shifted beyond static publication and batch-oriented querying. Contemporary systems operate in event-driven and streaming settings, where information is produced, transmitted, and consumed continuously. Examples include IoT sensor networks, activity feeds such as ActivityPub, knowledge graph updates, benchmark stream distribution, Trustflows, Nanopublications, or returning multiple results when evaluating SPARQL CONSTRUCT queries. In these environments, we need a standard understanding of how RDF statements are grouped as units of communication.

A persistent practical issue is that RDF's standard semantics and its mainstream processing ecosystem are largely agnostic to message boundaries. RDF graphs and datasets are typically treated as collections of statements that can be merged, reordered, and partitioned without semantic effect. This is appropriate for many knowledge representation tasks, but it becomes problematic when the producer intends a specific grouping of triples or quads to be interpreted atomically as a single communicative act, for instance: "this set of statements constitutes one observation", "this is one update in a stream", or "this is one query result".

When explicit boundaries are absent, consumers often rely on transport-level assumptions, such as treating newlines as delimiters or using a streaming protocol such as WebSockets where messages are already present at the transport layer. Alternatively, consumers may apply heuristics to reconstruct the intended message, such as Concise Bounded Description or ad hoc uses of vocabulary constructs and storage features. Such approaches are non-standard patchwork that will break once messages need to travel across multiple systems and different sets of tooling.

A commonly suggested workaround is to use named graphs as the grouping mechanism. However, this conflates two separate concerns: scoping statements for dataset semantics versus grouping statements for communication. Named graphs provide a way to partition statements within an RDF dataset, whereas messages capture what a producer intends to communicate as one exchange unit. For example, a single message may contain statements in multiple named graphs and in the default graph; conversely, a message may even be empty, for example to represent a heartbeat or keep-alive event. The following example replicates how named graphs are used in event-oriented streaming Linked Data systems.

1

2

3

4

5

6

7

8

9

10

11

12

13

14

@prefix ex: <https://example.org/ns#> .

@prefix prov: <http://www.w3.org/ns/prov#> .

@prefix sosa: <http://www.w3.org/ns/sosa/> .

@prefix xsd: <http://www.w3.org/2001/XMLSchema#> .

_:messageContext

prov:generatedAtTime "2026-01-30T10:05:34Z"^^xsd:dateTime .

_:messageContext {

ex:Observation1

a sosa:Observation ;

sosa:resultTime "2026-01-30T09:52:30Z"^^xsd:dateTime ;

sosa:hasSimpleResult 21.4 .

}

Either way, named graphs also do not solve a performance problem. In RDF, the order of RDF statements does not carry semantic meaning. As a consequence, statements belonging to the same named graph or message may appear far apart in a serialization, and extracting one complete graph or message may require parsing the full input first.

In this paper, we argue that message grouping must be made explicit as a first-class interoperability concern for RDF-based streaming and event-driven systems. The next section introduces our proposal of RDF Messages, a draft report in the RDF Stream Processing Community Group. The current specification is available at w3c-cg.github.io/rsp/spec/messages.

2. RDF Messages

An RDF Message is an RDF Dataset that is intended to be interpreted atomically as a single communicative act. The concept does not change the RDF semantics of the statements inside the dataset. Rather, it makes explicit that a particular grouping of statements belongs together as one message for communication, storage, and processing purposes. An RDF Message may also be empty, for example to represent a keep-alive event.

Building on this concept, the paper defines an RDF Message Stream as an ordered sequence of RDF Messages, potentially unbounded, transmitted from one system to another. Furthermore, it defines an RDF Message Log as a replayable representation of such a stream that preserves message order and boundaries. A stream is consumed as it is produced, whereas a log allows the same sequence of messages to be archived, exchanged, replayed, or processed again later.

By default, each RDF Message is only asserted in its own context. Therefore, RDF Messages should not automatically be interpreted as if all statements from all messages were asserted in one global dataset. Instead, each message forms its own unit of communication and interpretation. A consumer or stream processor may derive downstream assertions from a message, but that step depends on the application and its operational setting. This keeps the core concept of RDF Messages compatible with existing RDF semantics while avoiding the ambiguity that arises when messages are implicitly merged. For example, one message may indicate that the radio is playing song A, while another message indicates that the same radio is playing song B. This is not a contradiction, as these messages refer to different contexts, that is, different moments in time.

Blank node identifiers in RDF Message Streams and RDF Message Logs are scoped to the message in which they occur. This allows processors to handle long-running streams incrementally, without having to preserve blank node identifiers across message boundaries or risk identifier collisions. When applications need to refer to the same resource across multiple messages, they can still use skolemization.

The core notion of RDF Messages is intentionally minimal: it does not prescribe one fixed way to identify messages, attach timestamps, express delivery semantics, or define how message contents should affect downstream state. These aspects depend on the application domain and are therefore better specified separately. The authors foresee RDF Message Profiles to define how messages can be structured and interpreted in a given setting. Such profiles may constrain the shape and ordering of messages, define how the chronology of a stream is determined, specify versioning rules, define hypermedia controls for paginating a log, describe transaction boundaries, define how changes are to be applied, or indicate retention policies.

These are features that can already be expressed using Linked Data Event Streams, and can be partially

supported by specifications like PROV-O, ActivityStreams, SSN/SOSA, or SAREF. For the earlier example, one

could define a PROV-O RDF Message profile specifying that prov:generatedAtTime defines the

chronological order, and maybe even configure that sosa:resultTime defines the version order.

RDF Messages do not by themselves prescribe a concrete syntax. To exchange, archive, or replay RDF Messages, serializations need a way to preserve message boundaries explicitly. RDF 1.2 introduces version announcement mechanisms, which the proposal uses as a hook to indicate that a document uses message-aware syntax. This allows a parser to know from the start that it should preserve message boundaries while reading the input.

1

2

3

4

5

6

7

8

9

10

11

12

13

14

15

16

17

# Proposed Turtle-like RDF Message Log syntax

VERSION "1.2-messages"

@prefix sosa: <http://www.w3.org/ns/sosa/> .

@prefix xsd: <http://www.w3.org/2001/XMLSchema#> .

_:b0

sosa:resultTime "2026-05-12T18:20:00Z"^^xsd:dateTime ;

sosa:hasSimpleResult 22 .

MESSAGE # an empty message, e.g., a heartbeat

MESSAGE # the third message is another observation

_:b0

sosa:resultTime "2026-05-12T18:25:00Z"^^xsd:dateTime ;

sosa:hasSimpleResult 23 .

_:b0 is reused in the first and third messages because blank node identifiers

are scoped per message.

This is only one way to serialize RDF Message Logs. The proposal also supports adoption in other serializations, such as YAML-LD, JSON-LD, or Jelly-RDF, which already has the notion of frames, as well as in ecosystems such as types in the RDF-JS TypeScript ecosystem.

3. Use Cases

Internet of Things. IoT devices emit streams of discrete messages, for example temperature observations from a thermometer. While ontologies like SOSA/SSN allow one to describe such observations, the messaging mechanism remains undefined. RDF Message Streams provide the interoperability basis for such streams, needed for unambiguous interpretation of IoT data.

Event-driven knowledge graph updates. Microservices frequently emit patch-like RDF payloads representing updates. RDF Messages can represent each update dataset as an atomic message, while allowing deployments to choose whether the update semantics are interpreted as INSERT/DELETE operations, out-of-band, or as domain-level events, in-band. A real-life example of this is the Nanopublication Network, which continuously replicates streams of new Nanopublications, each being an RDF Dataset, across services.

SPARQL CONSTRUCT results as units. When answering a SPARQL CONSTRUCT query, SPARQL endpoints return the triples that can be used by a consumer to reconstruct the answers. The proposal uses RDF Messages so that the consumer can simply understand from the serialization which triples belong to one specific result. This enables more granular reuse of SPARQL CONSTRUCT results in query clients.

Archiving and replaying of RDF streams. Archiving continuous RDF output as a log is common for analytics, debugging, and reproducibility. Without message boundaries, archives become ambiguous: replay may emit different segmentations than the original, affecting downstream consumers that rely on atomic events. RDF Message Logs address this by storing each dataset as a record with an explicit boundary and optional offset or time metadata.

4. Conclusion

RDF Messages offer a simple and standardizable abstraction, while leaving use case-specific interpretation to profiles built on top. As this proposal is being developed within the W3C Community Group on RDF Stream Processing, the authors invite comments, implementations, participation, and broader community support to help refine the specification and move toward convergence on a shared approach for RDF Messages.

The current specification is available at w3c-cg.github.io/rsp/spec/messages.

An online discussion

The poster